Why Residential Internet Traffic Is Valued Online

Websites don’t treat all internet connections equally. The same request can get instant access when it arrives from a home broadband connection and an immediate block when it arrives from a commercial server farm.

This distinction affects anyone running automated data collection, testing web applications, or managing multiple online accounts. And it’s becoming more important as companies deploy increasingly sophisticated systems to analyze incoming traffic.

The technical reasons behind this preference reveal a lot about modern web architecture and how businesses protect their digital assets from unwanted automated access.

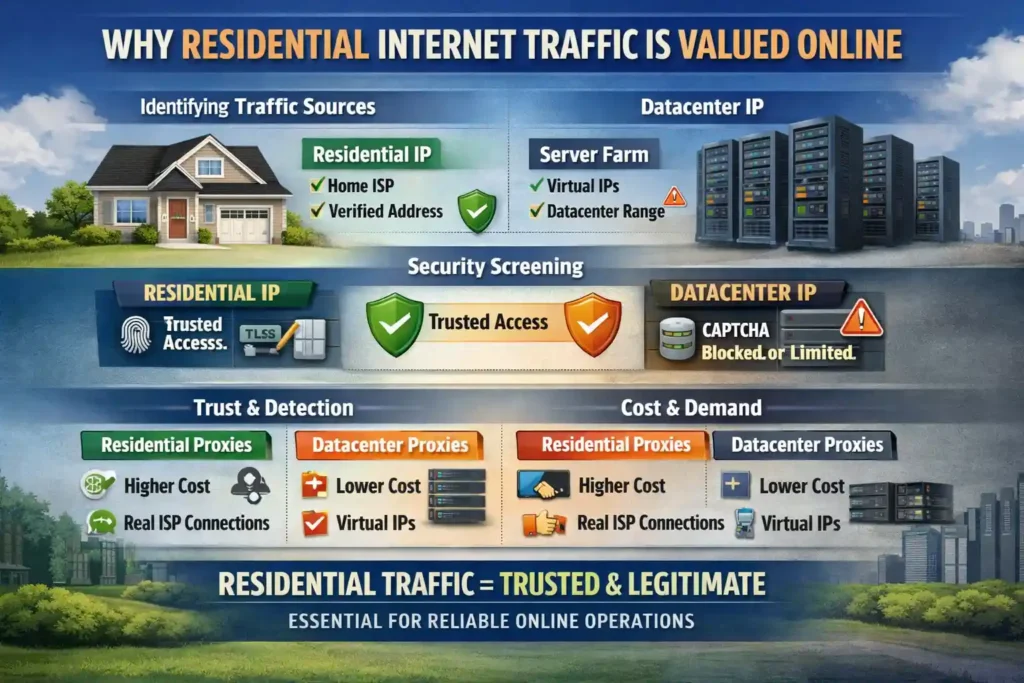

How Websites Identify Traffic Sources

Every device connecting to the internet receives an IP address from its network provider. Internet Service Providers like Comcast, Verizon, and BT assign residential IPs to home customers through standard consumer agreements. Because the underlying address blocks are registered to the ISP, they leave a digital paper trail that websites can check against public databases.

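Commercial datacenter IPs work differently. They come from address blocks registered to hosting and cloud providers like Amazon Web Services, Google Cloud, and DigitalOcean, which assign them to servers and virtual machines rather than to households. Regional registries maintain public databases showing which organization holds each address block, and IP intelligence databases built on that data label ranges as belonging to residential ISPs or commercial hosting providers, making identification fairly straightforward.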

When a request hits a website’s servers, security systems run quick lookups of the source address against this data. A connection from a recognized residential IP gets treated like a normal visitor browsing from home. The same request from a known datacenter range might trigger CAPTCHA challenges, rate limiting, or outright blocks. For organizations needing residential-grade connections at scale, residential proxy services provide access to ISP-assigned addresses without managing physical infrastructure across multiple locations.
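The lookup step can be sketched in a few lines of Python. This is only an illustration, not how any particular security product works: the ranges are reserved documentation networks (RFC 5737) standing in for real datacenter blocks, and the names `DATACENTER_RANGES`, `classify_ip`, and `choose_action` are invented for the example.

```python
import ipaddress

# Hypothetical "known datacenter" ranges. These are reserved documentation
# networks (RFC 5737), used here purely as placeholders.
DATACENTER_RANGES = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def classify_ip(address: str) -> str:
    """Return 'datacenter' if the address is in a listed range, else 'unlisted'."""
    ip = ipaddress.ip_address(address)
    for network in DATACENTER_RANGES:
        if ip in network:
            return "datacenter"
    return "unlisted"


def choose_action(address: str) -> str:
    """Map the classification to a response policy (illustrative only)."""
    return "challenge" if classify_ip(address) == "datacenter" else "allow"


if __name__ == "__main__":
    for addr in ("192.0.2.15", "203.0.113.7"):
        print(addr, classify_ip(addr), choose_action(addr))
```

A production system would load ranges from a maintained IP intelligence feed and use a faster structure than a linear scan, but the principle is the same: membership in a labeled range drives the first decision.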

The Internet Assigned Numbers Authority (IANA) oversees global IP allocation through five regional internet registries: ARIN, RIPE NCC, APNIC, LACNIC, and AFRINIC. This hierarchical system means every address block can be traced back to the organization that holds it, whether that’s a residential ISP serving home customers or a datacenter provisioning virtual servers.

The Trust Factor in Web Security

Bot detection has become big business. Cloudflare alone processes over 57 million requests per second across its network, using that massive dataset to build behavioral models distinguishing humans from automated scripts.

The company’s documentation on data scraping explains how scrapers can harm businesses through stolen pricing data, degraded site performance, and inflated bandwidth costs. But the detection methods go far beyond simple IP lookups.

Modern systems analyze TLS handshake patterns, HTTP/2 fingerprints, JavaScript execution behavior, and mouse movement data. Residential IPs usually clear the first filter because they’re associated with legitimate consumer activity. Datacenter IPs start with a penalty, so they need near-perfect behavior across every other metric to avoid triggering blocks.
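One way to picture the "starting penalty" is as a simple additive risk score. The sketch below is a toy model, not any vendor’s algorithm: the signal names, the weights, and the threshold are all made-up assumptions chosen to show how a datacenter origin leaves no room for a second failed check.

```python
from dataclasses import dataclass


@dataclass
class RequestSignals:
    ip_type: str                 # "residential" or "datacenter"
    tls_fingerprint_known: bool  # TLS handshake matches a common browser
    runs_javascript: bool        # client executed the JavaScript probe
    has_pointer_activity: bool   # mouse or touch movement was observed


# Hypothetical weights and threshold, chosen only for illustration.
DATACENTER_PENALTY = 40
FAILED_SIGNAL_PENALTY = 25
BLOCK_THRESHOLD = 60


def risk_score(signals: RequestSignals) -> int:
    """Add the IP-origin penalty to a fixed penalty per failed signal."""
    score = DATACENTER_PENALTY if signals.ip_type == "datacenter" else 0
    checks = (
        signals.tls_fingerprint_known,
        signals.runs_javascript,
        signals.has_pointer_activity,
    )
    score += FAILED_SIGNAL_PENALTY * sum(1 for passed in checks if not passed)
    return score


def is_blocked(signals: RequestSignals) -> bool:
    """Block once the accumulated score reaches the threshold."""
    return risk_score(signals) >= BLOCK_THRESHOLD
```

With these invented numbers, a datacenter request that passes every check stays under the threshold, but a single failed signal pushes it over. A residential request can fail two checks and still get through.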

It’s a bit like airport security. Someone with a known travel history and proper documentation breezes through. A first-time visitor with unusual paperwork gets extra scrutiny, even if they’re completely legitimate.

Why Businesses Pay Premium Prices for Residential Access

The price difference tells the story. Datacenter proxies cost a fraction of residential alternatives because they’re cheap to create (one powerful server can host hundreds of virtual IPs), and they usually come with unlimited bandwidth as standard.

Residential proxies require actual devices connected to real ISP networks. That physical requirement drives costs up significantly, with most providers charging per gigabyte of data transferred rather than offering unlimited plans.

Yet businesses still pay the premium. E-commerce companies tracking competitor pricing across dozens of markets need their requests accepted without constant CAPTCHA interruptions. Market researchers collecting regional sentiment data can’t afford to get blocked halfway through a campaign.

According to Pew Research Center, 72% of Americans feel that all or most of what they do online is tracked by advertisers, technology firms, or other companies. Scrutiny runs in the other direction too: websites have built defensive systems that examine every incoming connection. Residential traffic passes through these defenses because it looks exactly like the legitimate visitors these systems are designed to protect.

Technical Performance Considerations

Speed and reliability also factor into the equation. Datacenter proxies typically outperform residential alternatives on raw throughput, with some tests reporting task completion 5 to 10 times faster. But that speed advantage disappears when requests get blocked or rate-limited.
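A rough way to see why the speed advantage can vanish is to multiply raw request rate by the share of requests that actually succeed. The function names and all figures below are illustrative assumptions, not measurements.

```python
def effective_throughput(raw_requests_per_min: float, success_rate: float) -> float:
    """Successful requests per minute: raw rate scaled by the success rate."""
    if not 0.0 <= success_rate <= 1.0:
        raise ValueError("success_rate must be between 0 and 1")
    return raw_requests_per_min * success_rate


def break_even_success_rate(fast_raw_rate: float, slow_effective_rate: float) -> float:
    """Success rate the faster proxy needs to match the slower one's useful output."""
    if fast_raw_rate <= 0:
        raise ValueError("fast_raw_rate must be positive")
    return slow_effective_rate / fast_raw_rate


if __name__ == "__main__":
    # Hypothetical scenario: the datacenter proxy is 10x faster on paper
    # but is blocked far more often than the residential one.
    datacenter = effective_throughput(600, 0.08)
    residential = effective_throughput(60, 0.90)
    print(f"datacenter:  {datacenter:.1f} successful requests/min")
    print(f"residential: {residential:.1f} successful requests/min")
    print(f"break-even datacenter success rate: "
          f"{break_even_success_rate(600, residential):.2%}")
```

Under these made-up inputs, the nominally faster proxy delivers fewer useful responses per minute once its block rate is taken into account.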

Geographic proximity matters too. A residential proxy in Frankfurt accessing German e-commerce sites will usually see lower latency than a datacenter proxy in Virginia, because every request from Virginia has to cross the Atlantic and back. That round-trip latency adds up across thousands of requests.
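The cumulative effect is simple arithmetic. The sketch below assumes one round trip per request and ignores server processing time. The round-trip times in the usage example are placeholder values, and `total_wait_seconds` is a name invented for this illustration.

```python
import math


def total_wait_seconds(requests: int, rtt_ms: float, concurrency: int = 1) -> float:
    """Time spent waiting on round trips, assuming one round trip per request.

    Requests are sent in batches of `concurrency`; each batch costs one RTT.
    """
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    batches = math.ceil(requests / concurrency)
    return batches * rtt_ms / 1000


if __name__ == "__main__":
    # Placeholder round-trip times, not measured values.
    nearby = total_wait_seconds(10_000, rtt_ms=10)
    transatlantic = total_wait_seconds(10_000, rtt_ms=100)
    print(f"nearby proxy:        {nearby:.0f} s waiting")
    print(f"transatlantic proxy: {transatlantic:.0f} s waiting")
```

Raising `concurrency` shrinks both totals, but the ratio between them stays the same, which is why distance still matters for large jobs.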

Smart operators match their proxy selection to specific use cases. High-volume, low-risk tasks might work fine on datacenter IPs with aggressive rotation. Anything requiring sustained access to protected sites (social media management, price monitoring, account verification) typically demands residential connections.
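That matching rule can be written down as a small decision function. This is a deliberately coarse sketch of the rule of thumb described above. The `Task` fields and the return labels are assumptions made for the example, and borderline cases (such as a one-off request to a protected site) would need real testing rather than a two-flag rule.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    protected_site: bool    # target runs bot detection
    sustained_access: bool  # task needs long-lived, repeated access


def pick_proxy_type(task: Task) -> str:
    """Residential for sustained access to protected sites, datacenter otherwise."""
    if task.protected_site and task.sustained_access:
        return "residential"
    return "datacenter with rotation"


if __name__ == "__main__":
    tasks = [
        Task("price monitoring", protected_site=True, sustained_access=True),
        Task("bulk public-page crawl", protected_site=False, sustained_access=False),
    ]
    for task in tasks:
        print(f"{task.name}: {pick_proxy_type(task)}")
```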

The Bottom Line on Traffic Legitimacy

The web has evolved into a space where your connection’s origin matters as much as your actions. Websites invest heavily in distinguishing between human visitors and automated traffic because the financial stakes are real.

Residential internet traffic carries inherent trust because it originates from the same networks used by paying customers. That trust translates into access, and access is what makes online operations possible.